(Un)Fortunately for you, I've decided to give it a go anyway. I'm motivated in part by some posts on related topics that you probably didn't read, but which raised interesting questions. For example, there was this post on the work of Jonathan Haidt, which you will soon find out is the leading edge of the "intuitionist" picture of moral judgment. There was also this post which made some pretty strong claims about the ways in which philosophers should use moral psychology to constrain ethical theories. And there was this post, which brings to mind a question that I tend to ask about a lot of things: what makes a person an expert? In this case, what makes a person a moral expert? Hopefully, by the time I'm done, you will have some idea of what the intuitionist view of moral judgment is, in what ways moral psychology and moral philosophy should interact, and who, if anyone, might be a moral expert. There are a bunch of other issues that I'll try to touch on as well. Is morality a natural kind in the brain, or to use a stranger label, a cognitive kind? How much influence does conscious reasoning have on our moral judgments and behavior? How does communication affect moral judgment? These and other difficult questions will be answered definitively in these posts. OK, so maybe not definitively, or at all, but I'm at least going to touch on them.

As I've done in the past, I'm going to start with the neuroscience. I usually do this for two reasons. First, cognition happens in the brain. This may come as a shock to some of you, especially if you're still clinging to some sort of 17th century mental dualism (a note just for you folks: there is no evidence that the pineal gland is involved in moral judgment), but it's true. Second, while neuroscience may present the best and hardest evidence in some of the lower-level areas of cognitive science, like perception, when we get into higher-level cognition, it often gives us the weakest, or at least the most equivocal, evidence we have. This is especially true for areas that are wrapped up in social contexts, as moral judgment certainly is. It would be a shame to end on a weak note, so instead I'll start with the neuroscience and build from there.

The Neuroscience

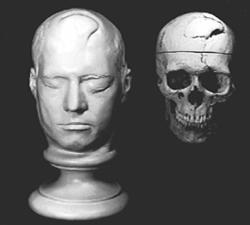

The first line of neuroscientific work comes from studies of patients with damage to the ventromedial prefrontal cortex (the kind of damage suffered by Phineas Gage, whose skull and death mask are on the left). Much of this work has been done by Antonio Damasio and his colleagues in the development and testing of the somatic marker hypothesis. This hypothesis states that bodily reactions to stimuli create affective markers that influence our decision making1. Thus we would expect that individuals with lesions to certain areas associated with somatic markers will display less optimal decision-making behavior, because the influence of affect on their decisions will be diminished, or less immediate. The ventromedial prefrontal cortex is thought to be one of the key areas associated with somatic markers.

The evidence for the somatic marker hypothesis comes primarily from the Iowa Gambling Task (see this paper for a description of the initial experiments, and the IGT itself). This task involves four decks of cards (A, B, C, and D), and every card gives an immediate reward when flipped over. Participants are given a "loan" to start, and told to earn as much money as possible by flipping over cards from any of the four decks. The A and B decks give a large immediate reward ($100), while the C and D decks give a smaller immediate reward ($50). By reward alone, then, we should pick cards from A and B. However, in each deck there are some cards that also result in a penalty. The size of the penalty in decks A and B is such that if you pick cards from those decks, you will end up with a net loss, while the penalty in decks C and D allows you a net gain. Thus, the optimal strategy is to pick from C and D. Bechara et al. (the paper linked above) found that normal participants (those without brain damage) quickly learn this, even though they are not consciously aware of the knowledge. In fact, when Bechara et al. measured skin conductance responses (which signal certain affective responses), normal participants at first had them after selecting cards, with different SCRs for rewards and punishments. After a short time, though, they began to have "anticipatory" SCRs, which resembled punishment responses when picking from A or B, or reward responses when picking from C or D. These anticipatory responses appeared before they expressed knowledge that A and B were bad decks.
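The payoff logic of the task is easy to lose in prose, so here is a minimal sketch of the arithmetic. The per-card rewards ($100 and $50) come from the description above. The penalty totals per ten cards are an assumption I've added for illustration (figures commonly reported for the task), and the `Deck` class and `rank_decks` function are hypothetical names, not anything from the original study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deck:
    name: str
    reward_per_card: int        # from the text: $100 for A/B, $50 for C/D
    penalty_per_10_cards: int   # ASSUMPTION: illustrative totals, not given in the text

    def net_per_10_cards(self) -> int:
        """Total reward from ten cards minus the total penalty on those cards."""
        return 10 * self.reward_per_card - self.penalty_per_10_cards


# ASSUMPTION: the penalty totals below are illustrative values only.
DECKS = [
    Deck("A", 100, 1250),
    Deck("B", 100, 1250),
    Deck("C", 50, 250),
    Deck("D", 50, 250),
]


def rank_decks(decks):
    """Order decks from best to worst long-run outcome."""
    return sorted(decks, key=lambda d: d.net_per_10_cards(), reverse=True)


if __name__ == "__main__":
    for deck in rank_decks(DECKS):
        print(f"Deck {deck.name}: net {deck.net_per_10_cards():+d} per 10 cards")
```

Under these assumed penalties, the decks with the bigger immediate reward lose money over the long run and the decks with the smaller reward gain it, which is the trap the task sets.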

Bechara et al. also tested patients with damage to the prefrontal cortex, specifically the ventromedial prefrontal cortex. These patients were horrible at the task, tending to pick from A and B very often, and thus ending with net losses. This was the case even when they expressed knowledge that A and B were bad choices. Furthermore, these patients never displayed anticipatory skin responses, indicating that the reason for their poor choices was the lack of an affective reaction influencing their behavior. From this, Damasio and others have concluded that this affective reaction is necessary for making rational choices. It's important to note, as well, that these patients tend to have a difficult time behaving appropriately in social situations, as well as understanding the behavior of others, even though they retain normal IQs and can verbalize the social knowledge they seem unable to act upon2.

What does this have to do with morality? Well, patients like those studied by Damasio developed brain damage in adulthood, and thus while they make bad decisions and often act confused in social situations, they tend to behave morally. This appears to be due to the fact that they had developed behavioral patterns and associations over the course of childhood that allow them to continue to behave morally3. What happens if the damage occurs early in development, and thus before moral knowledge is learned? To address this, Steven Anderson and his colleagues (who included Damasio, who is the last author on pretty much every study anyone conducted on any topic from 1994-2005) studied two patients whose prefrontal cortex damage had occurred prior to 16 months of age4. These patients showed many of the decision-making deficits that characterize prefrontal damage in adults, but they also showed much, much more. They were unable to learn social conventions and moral rules, and showed poor moral reasoning, and were just all around bad people. They lied, cheated, stole, were terrible parents, and for all of this, they showed no guilt or regret. They were so bad that Anderson et al. put it in these strong terms:

Thus early-onset prefrontal damage resulted in a syndrome resembling psychopathy.

Psychopathy! In fact, studies of the emotional responses of psychopaths have shown that they, like patients with prefrontal cortex damage, show a lack of emotional response to material to which normal participants generally show strong emotional reactions5. Thus, it appears that the prefrontal cortex, and especially the right ventromedial prefrontal cortex, is involved in these emotional responses. In fact, a recent study of patients who developed lesions of the right ventromedial prefrontal cortex in adulthood showed that, like psychopaths, they show impaired empathic responses, or the lack of empathic responses altogether6.

So, there is good evidence from lesion/brain damage studies that the prefrontal cortex, and the right ventromedial prefrontal cortex specifically, is involved in the emotional and empathic responses that are associated with moral judgment. Imaging studies point in the same direction. Moll et al. 7 presented participants with moral and nonmoral photos while they were in an fMRI machine. In this and similar studies (some of which involved moral and nonmoral sentences instead of photos), they found that the moral stimuli caused activation in several areas, including the ventral and medial prefrontal cortex (VMPC), as in the brain damage studies, later superior temporal cortex (STC), right posterior superior temporal sulcus (STS), frontal pole, medial frontal gyrus, right cerebellum, left orbitofrontal cortex, left precuneus, and posterior globus pallidus8. These areas are associated with social reasoning and theory of mind (VMPC, medial frontal gyrus, left precuneus), social perception (STC), general social cognition (STS), as well as affect, reward, emotional memory, and several other social and emotional functions.

From the study of brain damaged patients by Damasio and others, and the imaging studies of Moll, it's quite clear that areas associated with social reasoning, behavior, and memory, and areas associated with affect (both positive and negative) are important for moral reasoning. It's not clear, however, that areas of the frontal cortex associated with deliberative, conscious reasoning are involved.

There is another study, though, in which activation in such areas was observed during the performance of moral judgments. Greene et al. (notice these are all et al., because it takes 500 people to conduct a neuroscience experiment) used methods similar to those in the Moll experiments, in which people were exposed to moral and nonmoral stimuli while in an fMRI machine8. However, they added a twist. Some of the moral stimuli involved personal involvement, while others did not. They called them "personal" and "impersonal," respectively. The example Greene uses to illustrate the difference is the classic Trolley Problem. In one version of the trolley problem, a runaway train (or trolley) is hurtling towards five people who are unknowingly in its path, and who will certainly be killed unless the trolley is diverted. You can flip a switch and cause the trolley to change tracks. On the other set of tracks, there is one person who will be killed if you flip the switch. Should you flip the switch? In another version, the trolley is heading towards the same five people, but instead of flipping a switch, the only way to stop the trolley is to throw a large man onto the tracks in front of it. Should you do so? Most people answer yes to the first dilemma, and no to the second. The difference between these two problems, according to Greene et al., is that the first problem is impersonal, and the second personal. Here is Greene's more formal definition of the two different kinds of problems:

A moral violation is personal if it is: (i) likely to cause serious bodily harm, (ii) to a particular person, (iii) in such a way that the harm does not result from the deflection of an existing threat onto a different party. A moral violation is impersonal if it fails to meet these criteria. (Greene & Haidt, p. 519)

Consistent with their distinction, they found two distinct activation patterns for personal and impersonal moral stimuli. The personal moral stimuli, like the second version of the trolley problem, caused activation in several of the areas that were active in Moll's studies, including the medial frontal gyrus, posterior cingulate gyrus, angular gyrus, and the superior temporal sulcus, areas associated with affect. The impersonal moral stimuli, however, showed activation in areas that are active during cognitive tasks, particularly those involving working memory, but less active during emotional responses, including the middle frontal gyrus, dorsolateral prefrontal cortex, and parietal lobe. These areas were also active in the processing of nonmoral social stimuli. Thus, it appears that the processing of personal moral stimuli takes place largely in the prefrontal and precortical social and affective areas of the brain, while nonmoral social and impersonal moral stimuli utilize cognitive areas of the brain.
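Greene's three-part definition quoted above is a simple conjunction, and it can help to see it written out as one. The sketch below is my own illustration, not anything from the paper: the `Violation` class, the `is_personal` function, and the way I've coded the two trolley dilemmas are all assumptions, chosen to match how the text classifies them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    serious_bodily_harm: bool        # criterion (i)
    particular_person: bool          # criterion (ii)
    deflects_existing_threat: bool   # criterion (iii) requires this to be False


def is_personal(v: Violation) -> bool:
    """Personal only if all three criteria hold; otherwise impersonal."""
    return (
        v.serious_bodily_harm
        and v.particular_person
        and not v.deflects_existing_threat
    )


# ASSUMPTION: my reading of the two dilemmas described in the text.
# Flipping the switch redirects an existing threat onto someone else.
switch = Violation(True, True, deflects_existing_threat=True)
# Throwing the man onto the tracks harms him directly.
footbridge = Violation(True, True, deflects_existing_threat=False)

if __name__ == "__main__":
    for name, case in (("switch", switch), ("footbridge", footbridge)):
        label = "personal" if is_personal(case) else "impersonal"
        print(f"{name}: {label}")
```

On this coding the only thing separating the two dilemmas is criterion (iii), which is why the switch case comes out impersonal even though someone still dies.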

The take-home message of this is hard to pin down. It appears that areas of the brain associated with social reasoning and affect are heavily involved in moral reasoning, at least when we are personally invested in the situation, while cognitive processing, or what we would usually call moral reasoning, is less involved in these contexts, but does the bulk of the work when we're making moral judgments in situations in which we are not invested. It is also quite clear that moral judgment does not take place in a single area or group of highly interconnected areas, but is instead spread throughout much of the brain. Each of these brain areas is associated with a variety of functions that are related to moral judgment, such as social reasoning. We can reasonably conclude, then, that there is no single moral center in the brain, and that moral judgment is not a "cognitive kind," but is instead the product of multiple affective and social reasoning abilities that are activated in particular social contexts. We should be careful, though, in interpreting these results any further. Looking at photos or reading sentences with moral content while in a cramped fMRI machine is much different from participating in real-world social interactions that demand moral decisions we must then act upon. It may be that the imaging studies conducted so far present only a fraction of the neural picture of moral judgment.

In the next post, I'll look at the behavioral data, and get into the rationalist-intuitionist debate that the neuroscientific data has, in large part, sparked. After that, I'll start to try to answer some of the questions, looking specifically at communication, expertise, and the role of moral psychology in moral philosophy.

The second installment is here.

1 Damasio, A. (1994). Descartes' Error: Emotion, Reason, and the Human Brain. New York: Grosset/Putnam.

2 Ibid.

3 Greene, J., & Haidt, J. (2002). How (and where) does moral judgment work? Trends in Cognitive Sciences, 6(12), 517-523.

4 Anderson, S.W., Bechara, A., Damasio, H., Tranel, D., Damasio, A.R. (1999). Impairment of social and moral behavior related to early damage in human prefrontal cortex. Nature Neuroscience, 2(11), 1032-1037.

5 Greene & Haidt, 2002.

6 Shamay-Tsoory, S.G., Tomer, R., Berger, B.D., & Aharon-Peretz, J. (2003). Characterization of empathy deficits following prefrontal brain damage: The role of the right ventromedial prefrontal cortex. Journal of Cognitive Neuroscience, 15(3), 324-337.

7 Moll, J., Oliveira-Souza, R., Eslinger, P.J., Bramati, I.E., Mourão-Miranda, J., Andreiuolo, P.A., & Pessoa, L. (2002). The neural correlates of moral sensitivity: A functional magnetic resonance imaging investigation of basic and moral emotions. The Journal of Neuroscience, 22(7), 2730-2736.

8 Greene, J.D., Sommerville, R.B., Nystrom, L.E., Darley, J.M., & Cohen, J.D. (2001). An fMRI investigation of emotional engagement in moral judgment. Science, 293, 2105-2108.

18 comments:

This may come as a shock to some of you, especially if you're still clinging to some sort of 17th century mental dualism (a note just for you folks: there is no evidence that the pineal gland is involved in moral judgment), but it's true.

:-) On a complete side note, everyone knows Descartes's view on the pineal gland, but everyone forgets the man who actually did the scientific work that proved Descartes wrong. I've been intending to post something about him for some time, although it will still probably be a while before I do.

That's interesting, Brandon. I eagerly await a discussion of the pineal gland.

BTW Chris, one of your best posts yet. Very interesting.

Brandon, that's cool! I hadn't forgotten about him; I had never heard of him! I'm definitely going to look up more info on him now, though.

Will you be looking at how these functions evolved?

Anon, not very much. When I get to the social intuitionist model in the next post, I'll be talking about primitive vs. more complex forms of the moral emotions, because that's how Haidt talks about them. You might look at Greene's work, because he does include some speculation about the evolution of the "personal" and "impersonal" moral systems.

I came to find your blog from Lindsay's blog, Majikthise (sp?)--and I just have to say how much I'm enjoying your writing.

I am a philosophy MA student who used to be very interested in cognitive science, but ended up pretty bored with the way it was presented/used in philosophy of mind--it's nice to read something interesting again.

Thanks!

Jeff, glad you like the blog.

Sock, that is the same Greene. I hadn't read that paper. Thanks for pointing it out.

Chris, Great stuff. Looking forward to the rest.

One of the problems with the original Greene et al. (2001) paper is that some of the "areas associated with emotion" (medial frontal gyrus, bilateral angular gyrus) are *not* typically associated with emotion. This study did not observe activation in ventromedial prefrontal cortex in response to the personal-moral dilemmas.

The importance of Descartes was not that he put the site of interaction at the pineal gland (he could have put it in the foot for all the difference it would have made). Rather, he clearly articulated dualism. And don't fool yourselves people, we still haven't solved the mind-body problem, regardless what neuroscience says.

Indeed, all neuroscience is doing is taking pop psychology 'characteristics' of cognition and the person (in other words, not even real psychological concepts), and attributing these characteristics to the bits of stuff they examine with imaging equipment. They also routinely attribute the characteristics of the whole person to the parts they examine, for instance, neurons 'sensing' and 'acting' - nonsense.

Seems to me some of what Damon is reacting to is the correlation=causality problem. If A & B tend to occur together, it may well be that one causes the other. But which is which? Or maybe both are side-effects of something else. Or they co-operate and interact.

Complexity theory posits a resolution, sort of. But even with that it's hard to escape the Hall of Mirrors with infinite regressions/reflections of cause/effect.

Einstein said something like, "Nature doesn't care about our mathematics; she does all her computations empirically." Same with consciousness vs. materialism causality conundrums.
