Anne crutched the apple to Steve.

Given a little context, humans can understand this sentence very easily, because we can use our knowledge of crutches, apples, and our folk theories of physics to help us interpret it. How to get a computer to understand this, however, is one hell of a problem. The difficulty lies in getting all of that world knowledge in, and making it accessible in the right contexts. The second problem may be the easier one, but the first one has always seemed damn near intractable, and until you solve it, the second one has to wait.

Enter Google, and two computer scientists who had the insight that its vast network of interconnections might serve as just the sort of knowledge base that computers need to begin to look, well, like us. They basically measure how many hits a word gets in a Google search, along with how many hits it gets when combined with another word, and use that to compute a score that measures the similarity between words. Out of this, semantic knowledge emerges. Or at least, that's the claim.
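The score in question is what the paper calls the normalized Google distance (NGD). Here is a rough sketch of the calculation, assuming the hit counts have already been collected somehow. The function name `ngd`, the parameter names, and the counts in the example are illustrative assumptions rather than live search results, and `total_pages` stands in for the number of pages the search engine indexes.

```python
import math

def ngd(hits_x, hits_y, hits_xy, total_pages):
    """Normalized Google distance from raw hit counts.

    hits_x, hits_y : hits for each term searched alone
    hits_xy        : hits for the two terms searched together
    total_pages    : assumed size of the search index
    """
    if hits_x <= 0 or hits_y <= 0 or hits_xy <= 0:
        # Terms that never appear (or never appear together)
        # are treated as infinitely far apart.
        return float("inf")
    log_x, log_y = math.log(hits_x), math.log(hits_y)
    log_xy = math.log(hits_xy)
    return (max(log_x, log_y) - log_xy) / (math.log(total_pages) - min(log_x, log_y))

# Illustrative counts only (hypothetical, not fetched from Google).
example = ngd(hits_x=46_700_000, hits_y=12_200_000,
              hits_xy=2_630_000, total_pages=8_058_044_651)
print(round(example, 3))
```

With these counts the distance comes out around 0.44. Two terms that always show up together get a distance of 0, and the number grows as they co-occur less often than their individual popularity would suggest.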

The idea that the interconnections between words in a corpus are somehow related to their meaning, and thus knowledge, is not new. Latent Semantic Analysis (LSA) has been around since the late 90s. It analyzes a large corpus of text, and looks at how often two words co-occur (and how close together they are when they do), along with how often they occur without each other, and uses this score to place the words in a high-dimensional space. Similarity relations between different words, as measured by their distances from each other in that space, can then be used to approximate meaning. LSA is very powerful, in that it simulates human performance on many semantic tasks, such as lexical priming, word sorting, categorization, and even verbal SAT questions.
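To make the "high-dimensional space" idea a little more concrete, here is a minimal sketch of the usual textbook formulation of LSA: count how often each word appears in each document, reduce that matrix with a singular value decomposition, and compare words by the cosine of the angle between their reduced vectors. The toy corpus, the choice of two dimensions, and the helper name `similarity` are all assumptions made for illustration. Real LSA models use large corpora, weight the counts, and keep a few hundred dimensions.

```python
import numpy as np

# Hypothetical toy corpus: each string counts as one "document".
docs = [
    "doctor nurse hospital",
    "nurse hospital patient",
    "doctor patient hospital",
    "guitar drum band",
    "band guitar concert",
    "drum concert band",
]

vocab = sorted({word for doc in docs for word in doc.split()})
index = {word: i for i, word in enumerate(vocab)}

# Word-by-document count matrix.
counts = np.zeros((len(vocab), len(docs)))
for j, doc in enumerate(docs):
    for word in doc.split():
        counts[index[word], j] += 1

# Reduce to k dimensions with a truncated SVD.
U, S, Vt = np.linalg.svd(counts, full_matrices=False)
k = 2  # assumed; far smaller than anything used in practice
word_vecs = U[:, :k] * S[:k]

def similarity(a, b):
    """Cosine similarity between two words in the reduced space."""
    va, vb = word_vecs[index[a]], word_vecs[index[b]]
    return float(va @ vb / (np.linalg.norm(va) * np.linalg.norm(vb)))

print(similarity("doctor", "nurse"))
print(similarity("doctor", "guitar"))
```

In this deliberately tidy corpus the two topics never share a document, so "doctor" and "nurse" end up pointing in essentially the same direction while "doctor" and "guitar" are essentially orthogonal. Note that "doctor" and "nurse" come out as near-identical even though they only share one document directly; the reduced space picks up on the company they keep.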

What's new with the use of Google is the access to an unprecedentedly vast source of co-occurrence information: the entire internet. The technical details are pretty, well, technical, and I have to admit I am not qualified to critically evaluate most of the paper (linked above, and again below). When I read the words "Kolmogorov complexity," my eyes tend to roll up into the back of my head, and I lose consciousness for entire sections of AI papers. Much of the work in this paper is built around Kolmogorov complexity. However, despite my inability to comprehend everything in the paper, I think I've grasped the basics (it's enough like LSA, with which I am intimately familiar, for me to get the gist). And I think it's incredibly cool, especially when we consider the experiments they ran.

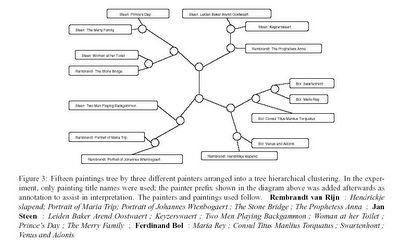

In one of the experiments, they googled the names of paintings by three 17th-century Dutch painters: Rembrandt, Steen, and Bol. Using their normalized Google distance calculation, the computer was able to sort the paintings roughly by painter (see the figure, and see the paper for a description of the method for deriving the tree), without being given any information about who painted what. They also used it to learn the meaning of electrical terms and words like "religious," to sort color and number names, to distinguish emergencies from "near emergencies," and to distinguish prime numbers from non-prime numbers.


Whether anyone will ever be able to use this method to get a computer to understand "Anne crutched the apple to Steve," I do not know. However, given the size of the knowledge base that this method uses, it's probably the best method anyone's come up with so far, and if it turns out to be successful on some simpler problems, I don't doubt that AI researchers will improve upon it and develop programs that can use it to do all sorts of nifty things.

Once again, the paper, titled "Automatic Meaning Discovery Using Google," and written by Rudi Cilibrasi and Paul Vitanyi, is here.

6 comments:

This is absolutely fascinating!!!! Why didn't I think of this?! It seems so obvious now.

I've written co-location code before. I'm not at all convinced this will work. I think it is like statistical concept formation. It is too "fuzzy" to work at a certain level. That's not to say it won't be useful. Most AI routines end up being useful for certain functions. But they rarely, rarely, rarely live up to the hype.

What this might be more useful for is automatic translation though. There's some exciting work on that going on.

I question whether or not we will be able to say that the computer has gained any understanding by merely associating some words with others. Its ability to sort the referents of words based on their co-occurrences with other words certainly seems different than understanding. It seems that I could do just what this computer does in such a way that carries no semantic content whatsoever. Perhaps I'm missing something, but wasn't this Searle's point over twenty years ago?

Other Chris (I wish I had a more unusual name, so that going by my first name wouldn't create so much confusion), you're definitely right. Using LSA or Google scores to model meaning does nothing to solve the symbol-grounding problem (I don't want to get into intentionality, or the "Chinese Room"). Still, LSA models our use of meaning very well, at least in some contexts, and explaining how it does this might give us more insight into how humans process meaning. It might even shed some light on the symbol-grounding problem.

Ok I understand, it's more a matter of processing rather than the actual 'symbol-grounding' (which I assume means something like semantics). Thanks.

Very interesting... I wonder what relationships it would come up with if you fed it a mass collection of tags from flickr, delicious, ourmedia, etc...?
